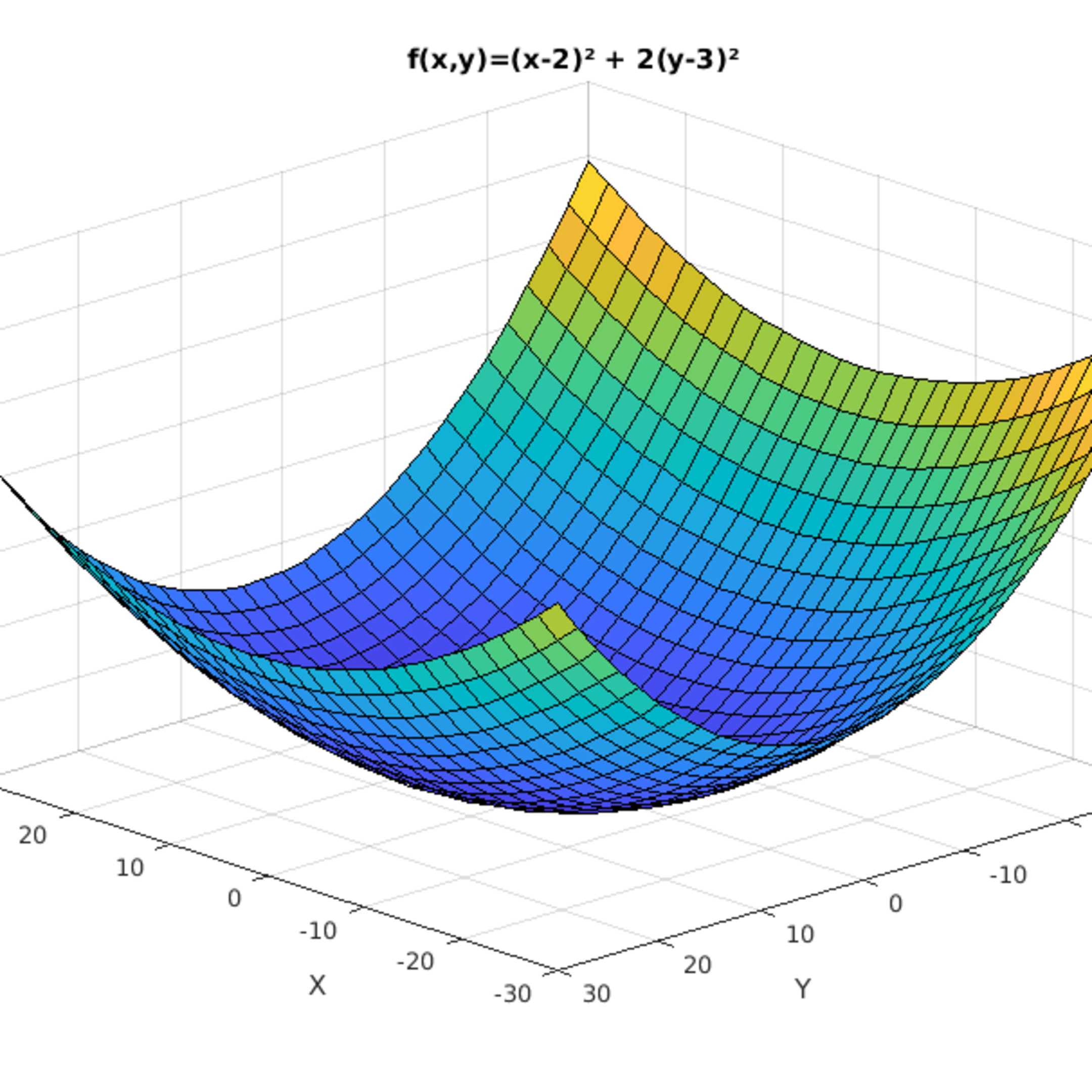

This post explores how many of the most popular gradient-based optimization algorithms actually work.

Note: If you are looking for a review paper, this blog post is also available as an article on arXiv.

Update: Added a note on recent optimizers.

Update: Most of the content in this article is now also available as slides.

Update: Added derivations of AdaMax and Nadam.

Update 21.06.16: This post was posted to Hacker News. The discussion provides some interesting pointers to related work and other techniques.

Gradient descent is one of the most popular algorithms to perform optimization and by far the most common way to optimize neural networks. At the same time, every state-of-the-art Deep Learning library contains implementations of various algorithms to optimize gradient descent (e.g. lasagne's, caffe's, and keras' documentation). These algorithms, however, are often used as black-box optimizers, as practical explanations of their strengths and weaknesses are hard to come by.

This blog post aims to give you intuitions about the behaviour of different algorithms for optimizing gradient descent that will help you put them to use. We are first going to look at the different variants of gradient descent. We will then briefly summarize challenges during training. Subsequently, we will introduce the most common optimization algorithms by showing their motivation to resolve these challenges and how this leads to the derivation of their update rules. We will also take a short look at algorithms and architectures to optimize gradient descent in a parallel and distributed setting. Finally, we will consider additional strategies that are helpful for optimizing gradient descent.

Gradient descent is a way to minimize an objective function \(J(\theta)\) parameterized by a model's parameters \(\theta \in \mathbb{R}^d\).

In code, instead of iterating over examples, we now iterate over mini-batches of size 50:

    for i in range(nb_epochs):
        for batch in get_batches(data, batch_size=50):
            params_grad = evaluate_gradient(loss_function, batch, params)
            params = params - learning_rate * params_grad
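To make the mini-batch loop above concrete, here is a minimal runnable sketch in Python with NumPy. The names `get_batches`, `evaluate_gradient`, `loss_function`, `nb_epochs` and `learning_rate` come from the snippet in this post, but their bodies below are assumptions made for illustration: the model is a hypothetical noise-free linear regression, the gradient is approximated with central finite differences, and the per-epoch shuffle is an addition that the snippet itself does not show. Each step applies the update \(\theta = \theta - \eta \cdot \nabla_\theta J(\theta)\), where \(\eta\) is the learning rate.

```python
import numpy as np


def get_batches(data, batch_size):
    """Yield consecutive row slices of `data` with at most `batch_size` rows."""
    for start in range(0, len(data), batch_size):
        yield data[start:start + batch_size]


def loss_function(batch, params):
    """Assumed objective: half mean squared error of a linear model.

    The last column of `batch` holds the targets, the others the features.
    """
    features, targets = batch[:, :-1], batch[:, -1]
    residual = features @ params - targets
    return 0.5 * np.mean(residual ** 2)


def evaluate_gradient(loss_function, batch, params, eps=1e-6):
    """Approximate the gradient of the loss w.r.t. params by central differences."""
    grad = np.zeros_like(params)
    for j in range(params.size):
        step = np.zeros_like(params)
        step[j] = eps
        grad[j] = (loss_function(batch, params + step)
                   - loss_function(batch, params - step)) / (2 * eps)
    return grad


def minibatch_gradient_descent(data, params, learning_rate=0.1,
                               nb_epochs=100, batch_size=50, seed=0):
    """Run the mini-batch loop from the post on a copy of `data`."""
    rng = np.random.default_rng(seed)
    data = data.copy()
    for i in range(nb_epochs):
        rng.shuffle(data)  # assumption: reshuffle rows every epoch
        for batch in get_batches(data, batch_size):
            params_grad = evaluate_gradient(loss_function, batch, params)
            params = params - learning_rate * params_grad
    return params


# Hypothetical toy data: 200 examples, 2 features, targets from known weights.
rng = np.random.default_rng(42)
features = rng.normal(size=(200, 2))
true_params = np.array([2.0, -3.0])
data = np.column_stack([features, features @ true_params])

fitted = minibatch_gradient_descent(data, params=np.zeros(2))
print(fitted)
```

Finite differences keep `evaluate_gradient` independent of the particular loss, at the cost of two loss evaluations per parameter. That is workable for a toy problem like this one, while deep learning libraries compute the gradient with automatic differentiation instead.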
